AI algorithms detect diabetic eye disease inconsistently

Although some artificial intelligence software tested reasonably well, only one met the performance of human screeners, researchers found.

Media Contact: Bobbi Nodell - bnodell@uw.edu, 206.543.7129

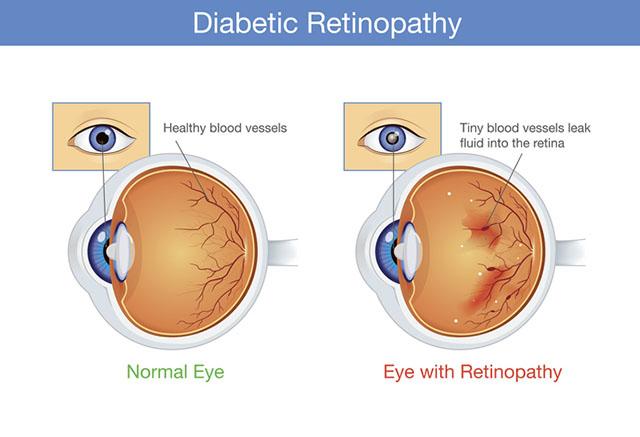

Diabetes continues to be the leading cause of new cases of blindness among adults in the United States. But given the current shortage of eye-care providers, it would be impossible to keep up with demand for the annual screenings this population requires. A new study looks at the effectiveness of seven artificial intelligence-based screening algorithms in diagnosing diabetic retinopathy, the most common diabetic eye disease leading to vision loss.

In a paper published Jan. 5 in Diabetes Care, researchers compared the algorithms against the diagnostic expertise of retina specialists. Five companies produced the tested algorithms – two in the United States (Eyenuk, Retina-AI Health), one in China (Airdoc), one in Portugal (Retmarker), and one in France (OphtAI).

The researchers deployed the algorithm-based technologies on retinal images from nearly 24,000 veterans who sought diabetic retinopathy screening at the Veterans Affairs Puget Sound Health Care System and the Atlanta VA Health Care System from 2006 to 2018.

The researchers found that the algorithms don’t perform as well as their makers claim. Many of these companies report excellent results in clinical studies, but the algorithms’ performance in a real-world setting was unknown. To test it, the researchers compared each algorithm, and the human screeners who work in the VA teleretinal screening system, against the diagnoses that expert ophthalmologists gave when looking at the same images. Three of the algorithms performed reasonably well when compared to the physicians’ diagnoses and one did worse. But only one algorithm performed as well as the human screeners in the test.

“It’s alarming that some of these algorithms are not performing consistently since they are being used somewhere in the world,” said lead researcher Aaron Lee, assistant professor of ophthalmology at the University of Washington School of Medicine.

Differences in camera equipment and technique might be one explanation. Researchers said their trial shows how important it is for any practice that wants to use an AI screener to test it first and to follow the guidelines for properly obtaining images of patients’ eyes, because the algorithms are designed to work with images that meet a minimum quality.

The study also found that the algorithms’ performance varied when analyzing images from patient populations in Seattle and Atlanta care settings. This was a surprising result and may indicate that the algorithms need to be trained with a wider variety of images.

This study was supported by NIH/NEI K23EY029246, R01AG060942 and an unrestricted grant from Research to Prevent Blindness.
